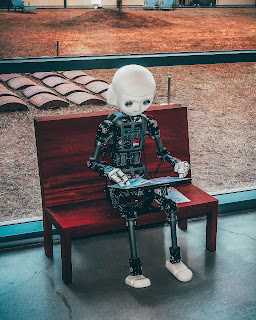

Photo by Andrea De Santis on Unsplash

Lately, AI has been the buzzword (well, buzz acronym) in conversations around enterprise communications. What is AI and how does it relate to communications? For the purposes of this article, "AI" refers to a meeting service's ability to generate correct transcriptions of what attendees say. This is how I would test meeting services to determine accuracy and benchmark them against one another. Note that I am an English speaker, and while my tests would primarily involve English, they could certainly be ported to any language a meeting service supports.

The goal of a meeting service transcription feature is to be 100% accurate. The likelihood that it can be 100% accurate in perfect conditions is high, but when does a meeting have perfect conditions? Testing only in perfect conditions seems pointless, so we need to go a bit overboard.

Setup

We would start with a 50-word script of general, nonspecific content spoken in a normal tone and at a normal speed. Two voiceover artists, one male and one female, would each record the script to a digital file. These are your base files.

A point-to-point call would be initiated in the meeting service being tested. One base file would be loaded into Audacity (or an equivalent program) and played on a loop, with VB-Audio Virtual Cable set as the microphone source for the meeting service. The audio stream would be sent to the remote endpoint (both endpoints would be in the same lab, but each connected to a separate network) and recorded by the meeting service itself. Once the test is finished, we would review the recording's transcription, noting any errors. With a 50-word script, each error is two points off, giving a maximum score of 100 for 100% accuracy. We would do this several times, likely several dozen times, to establish a baseline score for each meeting service we test.
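The scoring rule above can be sketched in a few lines of Python. This is only an illustration under my own assumptions: errors are counted as word-level substitutions, insertions, and deletions (an edit distance), punctuation and capitalization are ignored, and the function names `word_errors`, `score`, and `baseline` are hypothetical. A manual review could count errors differently.

```python
import re
from statistics import mean

def words(text):
    # Assumption: compare lowercase words only, ignoring punctuation.
    return re.findall(r"[a-z0-9']+", text.lower())

def word_errors(reference, hypothesis):
    """Count word-level substitutions, insertions, and deletions."""
    ref, hyp = words(reference), words(hypothesis)
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, 1):
        curr = [i]
        for j, h in enumerate(hyp, 1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # word missing from transcript
                            curr[j - 1] + 1,      # extra word in transcript
                            prev[j - 1] + cost))  # match or substitution
        prev = curr
    return prev[-1]

def score(reference, hypothesis):
    """100 minus an equal penalty per error (2 points for a 50-word script)."""
    points_per_error = 100 / len(words(reference))
    return max(0.0, 100 - points_per_error * word_errors(reference, hypothesis))

def baseline(reference, transcripts):
    """Average score over repeated runs of the same script."""
    return mean(score(reference, t) for t in transcripts)
```

Calling `baseline(script, transcripts)` with the transcripts from several dozen runs would give the baseline score for one meeting service. For the proper names test, the lowercase normalization could be dropped so that spelling and capitalization count.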

Then we apply this logic under ever increasing levels of difficulty.

Proper names

A script would be created with proper names sprinkled in and retested. A separate test could involve the names of the meeting attendees to see if the transcription spells them any differently. For example, would Bryan be spelled the correct way if I were in the meeting?

Technical jargon

A script would be created using industry jargon and acronyms and retested.

Variable speakers

The next test would use the original script with several variables, including rushed speech, a sprinkling of "ums" breaking up the conversation, and high- and low-pitched voices.

Noise cancellation and other settings

The original file would be used for testing all audio settings in a meeting service. Does increasing the noise cancellation to maximum affect the score? Here is where we would find out.

Noise

Speaking of noise, we would use the base file and overdub noises onto it and retest. From here we would also test all noise cancellation levels. Noises could include HVAC/brown noise, phones ringing, dogs barking, road noise, etc.
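To make the noise tests repeatable, the overdub could be done at a fixed signal-to-noise ratio rather than by ear. The sketch below is one way to do that, not a prescribed method: it assumes the speech and noise are already decoded into lists of float samples at the same sample rate, and the names `mix_at_snr` and `snr_db` are mine for illustration.

```python
import math

def power(samples):
    # Mean squared amplitude of the signal.
    return sum(s * s for s in samples) / len(samples)

def mix_at_snr(speech, noise, snr_db):
    """Overlay noise on speech so the speech-to-noise power ratio equals snr_db."""
    # Loop (or trim) the noise so it covers the whole speech clip.
    noise = [noise[i % len(noise)] for i in range(len(speech))]
    noise_power = power(noise)
    if noise_power == 0:
        raise ValueError("noise clip is silent")
    # Scale the noise so that speech_power / scaled_noise_power = 10^(snr_db / 10).
    gain = math.sqrt(power(speech) / (noise_power * 10 ** (snr_db / 10)))
    return [s + gain * n for s, n in zip(speech, noise)]
```

Running the same base file at, say, 20 dB, 10 dB, and 0 dB for each noise type would show where a given service's score starts to fall off. The sketch does not guard against clipping, so the mixed samples may need to be normalized before they are written back to a file.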

Distance to microphone

For this test, the base file would be played in a meeting space at various locations in the room to see if distance to the microphone has any effect on accuracy. This could also involve testing different microphones and mic arrays to see if hardware makes a difference.

Network conditions

The base file would be recorded while we introduce various network conditions. Does introducing packet loss mess up the transcription or is the "AI" so advanced it fills in the gaps?

Over talk

A file would be recorded with two people having a back-and-forth conversation. Does the AI distinguish between speakers? How does the meeting service handle two people talking over each other?

Accents and Language

This is where it can get out of control: testing not only a variety of English accents, but also switching languages in the middle of the conversation.

So to wrap up, any vendor bragging about "XX%" accuracy in transcription must be taken with a grain of salt. We need to know their test methodology and how deep they dive into it because, as mentioned before, testing in perfect conditions has little to do with real-world experience.

Bryan
